Flying High

Cirrus Logic grew out of a small company founded by Patil, a former MIT professor. At the university Patil had developed a microchip-level software system for controlling computer hard disk drives, which he called Storage/Logic Array (S/LA). The system improved hard drive function management, and Patil Systems was established to market the new product. After three years of struggle Patil contacted Hackworth, then at Signetics. It was Hackworth who saw the potential of the S/LA system to accelerate the design of a wide range of highly specialized semiconductors. All the development process required was a systems engineer who could program and arrange the chips to handle whatever function the product should perform. Hackworth joined Patil Systems as CEO, and the company was renamed after cirrus, the highest clouds in the sky, to express the elevated complexity of its products.

The S/LA system allowed Cirrus Logic to exploit new markets as they emerged. Having no fabrication of its own, the company used open foundries. Cirrus took advantage of the PC boom and finally succeeded with its own hard drive controller, the first to be mounted inside the drive mechanism. Then came time for more products, graphics included. IBM unveiled its VGA standard in 1987 and chip makers entered a new race, which Cirrus won by producing the first fully compatible VGA controller microchip. Cirrus Logic went public in 1989 and accelerated its growth through acquisitions, among them Pixel Semiconductor and Acumos. By 1993 Cirrus had become a major player in many areas of the incoming digital future.

The lack of its own fabrication seemed to stand in the way of further expansion. The flexible yet still fabless option was to at least buy long-term foundry capacity. In 1994 Cirrus Logic took a first tentative step toward its own fabrication capacity by investing in a joint venture with IBM named MiCRUS. A year later came a second joint fabrication venture, this time with Lucent, named Cirent. A new product line was supposed to help fill those capacities: 3D accelerators.

Cirrus got a jump start into 3D technology by acquiring patents and several engineers from Austek, a company which had just developed the A1060 OpenGL accelerator. Cirrus also cooperated with Argonaut on an API for its 3D products. Everything seemed to be in place for the first 3D accelerator, and Cirrus promised shipping by the end of 1994. That was the Mondello chipset, a GD5470 3D part with separate 2D and RAMDAC chips. It was meant to be a VL-Bus card with a 96-bit VRAM interface. I don't know exactly why Mondello was never finished, but the price would probably have been quite high and the card therefore aimed at the professional market. Execution was poor on some other products as well, and Cirrus Logic had to go through consolidation after its rapid expansion.

In April 1996 Cirrus licensed the 3D portion of 3DO's M2 technology, a 64-bit graphics accelerator built for a new console. In the end, however, Panasonic decided not to bring the second 3DO to market. The goal of Cirrus was to create a high-end card by integrating 3DO's technology into its own architecture. M2, on paper at least, was no joke, with triangle setup of half a million polygons per second and a fillrate of 100 million pixels per second. Cirrus set out to adapt the M2 technology for the PC platform and the Direct3D API.

Since the beginning of 1995 Cirrus had held a license for Rambus, which back then offered ten times higher performance than DRAM and for some time also lower prices. In the summer the CL-GD5462 became the first video accelerator to take advantage of 500 MHz RDRAM. Named Laguna, it started a product family utilizing Rambus memory. By the end of 1996 Laguna3D was ready for mass production. But were the specifications anything like those of M2?

Laguna3D

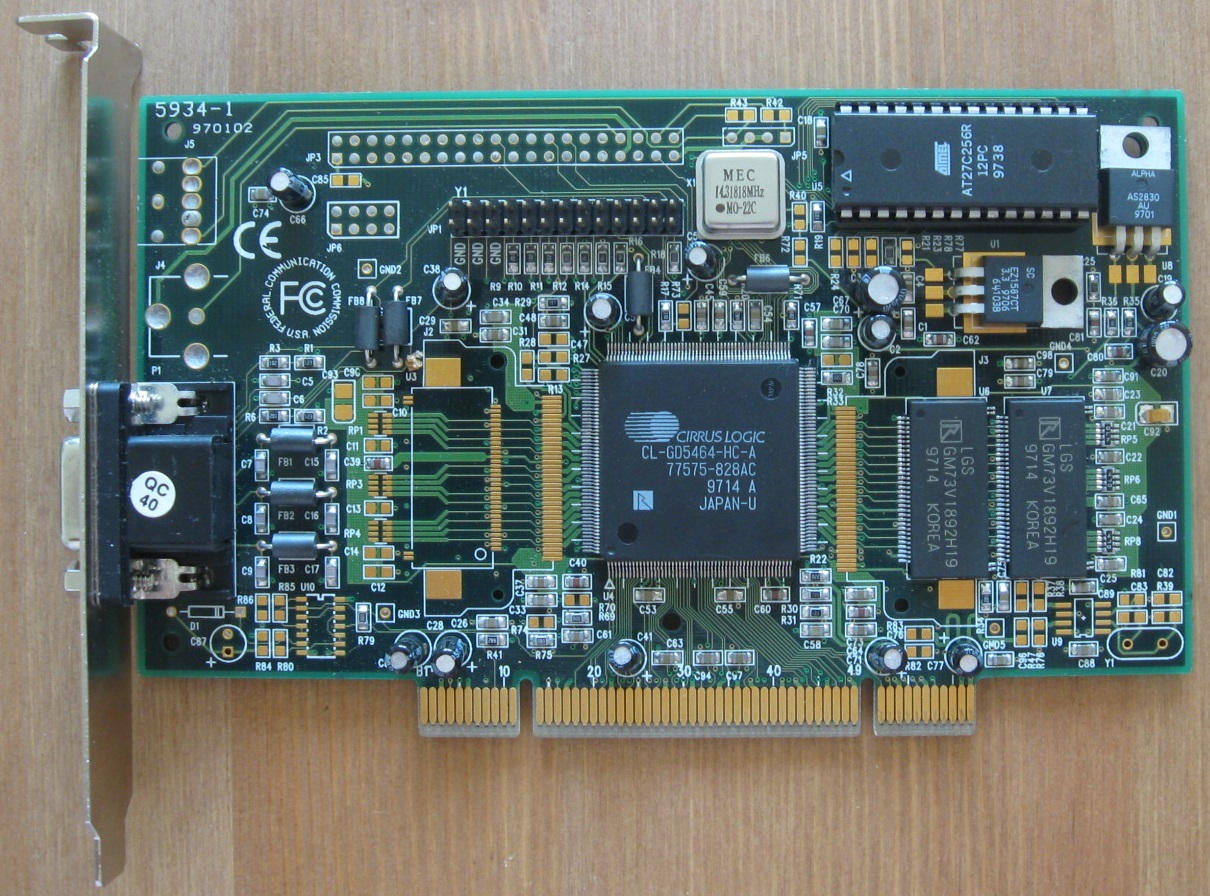

The first available 3D chip from Cirrus was numbered CL-GD5464.

During 1997 Cirrus updated the chip to the CL-GD5465. Between these chips there were more internal changes than Cirrus would admit. And even the 5465 itself had more than one revision.

Here is a cheap-looking, 5465-based Prolink card with a single 2 MB memory chip.

Finally, a 4 MB board from MSI, followed by the highest-clocked Chaintech card.

Besides the obvious bus change, the AGP cards, or at least their memory, can be clocked faster. The only other obvious improvement is a fixed bilinear filter, which I will get to later. The lack of major differences between the 5464 and 5465 puts the execution of Cirrus into question. Could they just have stayed idle while waiting for the release of Intel's 440LX AGP chipset in August 1997? Intel and Microsoft were very fond of Cirrus, and together they put on a show at the March Meltdown '97, starring Laguna3D-AGP as the industry's first hardware utilizing Intel's Accelerated Graphics Port to texture from system memory. No other 3D graphics provider got such an endorsement from the mighty Wintel.

In March Laguna3D PCI finally arrived on the market in the form of Creative's Graphics Blaster. Creative once again bought the rights for an early release and, more interestingly, announced that its Laguna3D would be compatible with CGL. That would make it compatible with the 3D Blaster library, however tests showed otherwise (thanks Gona). The initial promotional price of a hundred bucks should be a sign that Laguna3D is not such a hot performer.

All cores were manufactured on a 0.35 µm process at the MiCRUS fab. Members of the Laguna family use single-channel RDRAM with a bandwidth of up to 600 MB per second. Up to 4-way interleaving is possible. Laguna3D already has pins reserved for a second channel, to keep the same board layout for planned future products that would use two channels to double the memory bandwidth. Boards from that time already have a place for RDRAM on the second channel, ready for a pin-compatible Laguna3D successor. Both 2 MB and 4 MB variants are common. Up to 8 MB of local memory is supported and Techworks planned to release 6 MB and 8 MB cards, but I don't think that ever happened. The last chip revision, C, was meant for motherboard integration. My slower AGP cards can be overclocked by some 25-30%; the chip still stays quite cool and performance scales linearly.

Technology

Laguna3D takes the proven 5462 2D core, integrates a faster 230 MHz DAC with an 8-bit palette LUT, and of course adds a 3D module. That is a standard rasterizer of the time, sitting between FIFOs and fed from its own 16 kB of registers. It takes care of common rendering functions such as Gouraud shading, perspective correction (ahem), Z-buffering, texture map filtering (well ...) and fogging (uh). The texturing unit works in parallel with the polygon engine; available formats include 8880 RGBA, with textures up to 512x512 pixels. Cirrus was especially fond of its texturing engine, calling it the TextureJet architecture. It is a hardware texture manager with a sophisticated PCI/AGP bus mastering scheme that relieves the CPU of texture transfers between system and local video memory. Our processors would have appreciated something like triangle setup more, but at least there is something unique inside Laguna3D. The texture manager is supported by a 1 kB texture cache and an on-chip address translation table that tracks, via dynamic random accessing, all the memory locations of textures in use. These features helped Cirrus become the promoter of Intel's AGP model, although the 5465 uses only the basic 66 MHz bus speed. It survives an on-the-fly switch to 133 MHz, but 3D games then quickly froze the PC.

Pixels are tested by masks, and depth comparison is performed with the help of a cache, before lighting and blending are processed. All basic blending modes are supported except one: multiplicative blending. That was one big nail in Laguna3D's coffin, because as time went on game developers used it more and more. Overall, Laguna3D claimed performance of more than 50 million "perspective-corrected" textured pixels per second. 32-bit output is supported even for 3D rendering, and Laguna internally operates at least partially in true color.

The Rambus memory controller, one byte wide, should support a data rate of up to 667 MHz, but the boards I've seen run at only 600 MHz or even a bit less. The clock of the Rambus controller is half of the data rate, which means it has its own domain ticking at a frequency of 229-315 MHz. I could not find a way to read the chip clock, but 1/4 of the memory clock appears to be a safe assumption.
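The Rambus figures quoted above follow from simple arithmetic. Here is a minimal sketch, assuming that peak bandwidth is just data rate times channel width (protocol overhead ignored) and that the controller clock is half the data rate; `rambus_channel` is a made-up helper name, not anything from Cirrus documentation.

```python
def rambus_channel(data_rate_mhz, width_bytes=1):
    """Peak figures for one Rambus channel (hypothetical helper).

    Assumes peak bandwidth = data rate x channel width and a controller
    clock of half the data rate, as described in the text.
    """
    return {
        "bandwidth_mb_s": data_rate_mhz * width_bytes,
        "controller_mhz": data_rate_mhz / 2,
    }


# Data rates mentioned in the article: 5462 launch, typical boards, rated maximum.
for rate in (500, 600, 667):
    info = rambus_channel(rate)
    print(rate, info["bandwidth_mb_s"], info["controller_mhz"])

# Two channels, as reserved for the planned follow-up, would double the peak.
print(2 * rambus_channel(600)["bandwidth_mb_s"])
```

The chip clock is deliberately left out of the sketch, since it is only an estimate and could not be read from the hardware.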

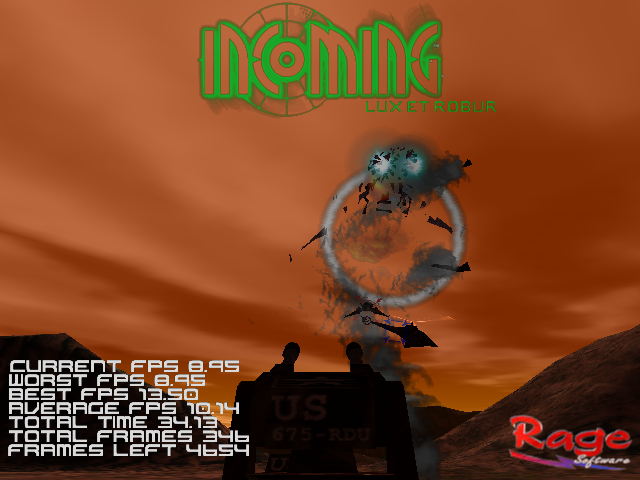

Incoming in 32 bit mode, no sign of dithering, but broken lens flares still ruin the show.

Experience

My first big complaint about image quality is the broken texture perspective correction. Just like ATI with the Rage II, Cirrus offers a "quality" setting to set the precision. The default setting is quite high, but some large flat surfaces still have problems; for example, you may see the ground shaking in Mechwarrior 2. The lowest value gives Laguna a speed increase, but perspective gets really fuzzy. The highest setting, on the other hand, is only marginally slower than the default, yet still does not provide perfect correction. Since most of the other accelerators I am comparing don't have such problems, I used the highest quality setting for my tests to level the conditions. For demonstration, here are screens from Shadows of the Empire:

First lowest quality, then default and finally highest.
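To illustrate why a precision setting trades speed against wobbling textures, here is a rough sketch. It assumes, purely as a guess, that the quality setting controls how often the exact per-pixel divide is performed, with plain linear interpolation in between. How Laguna3D really implements it is not known, and all names below are made up.

```python
def affine_u(u0, u1, t):
    """Plain linear interpolation of a texture coordinate (no perspective)."""
    return u0 + (u1 - u0) * t


def perspective_u(u0, w0, u1, w1, t):
    """Perspective-correct value: interpolate u/w and 1/w, then divide."""
    inv_w = (1 - t) / w0 + t / w1
    u_over_w = (1 - t) * u0 / w0 + t * u1 / w1
    return u_over_w / inv_w


def max_error(u0, w0, u1, w1, pixels, step):
    """Worst texture-coordinate error along a span of `pixels` pixels when the
    exact divide is done only every `step` pixels (hypothetical quality knob)."""
    worst = 0.0
    for x in range(pixels + 1):
        a = (x // step) * step          # last pixel with an exact value
        b = min(a + step, pixels)       # next pixel with an exact value
        ua = perspective_u(u0, w0, u1, w1, a / pixels)
        ub = perspective_u(u0, w0, u1, w1, b / pixels)
        f = 0.0 if b == a else (x - a) / (b - a)
        approx = affine_u(ua, ub, f)
        exact = perspective_u(u0, w0, u1, w1, x / pixels)
        worst = max(worst, abs(approx - exact))
    return worst


# A 64 pixel span receding from depth 1 to depth 4.
for step in (64, 16, 4, 1):
    print(step, round(max_error(0.0, 1.0, 1.0, 4.0, 64, step), 4))
```

Under these assumptions the error shrinks as the divide is performed more often and disappears only when it is done for every pixel, which would match a highest setting that is better than default but still not perfect.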

There are more interesting settings to play with in the Laguna registry, though they seem to apply mostly to the AGP version of Laguna3D. The TextureJet slider is one of those, but I could not get any relevant difference by changing its value.

Then there is the rather heavy dithering to 16-bit colors. It is nice when an accelerator uses higher internal precision, but the dither pattern employed by Laguna is far from accurate, and several overlapping layers of transparent textures can produce an awful, almost stipple-like pattern. The 32-bit color mode can be a savior, but speed may drop by some 20%. The CL-GD5464 chip is bound to disappoint, because its bilinear texture filter is one of the cheapest possible implementations. Laguna3D PCI merely calculates an interpolated color between pixels once and uses that, effectively doubling their number. Better than nothing, but magnified textures will still be blocky. The promised fogging fails across the game suite.

There was no sign of using system memory; in fact, the Laguna3D PCI 4 MB failed to run several games due to insufficient memory capacity. Laguna3D AGP does take advantage of the aperture and remains pretty compatible even with 2 MB of memory. But some games don't like textures outside of local memory: Viper Racing switches to very small mipmaps, and Incoming drops texturing completely. Then there are games that partially give up on texturing, likely because of a failure in the lightmap phase. Those are games using or originating from the Quake engine, like Half-Life and Thief. World texturing does not work in those; the promise of a single lighting pass from the spec sheets really holds only for basic techniques. Unreal skips only the lightmaps, and Laguna is useless for other effects as well.

Finally, there are many small problems caused by the missing multiplicative alpha blending or by driver bugs. With 2 MB the sky in CartX Racing is not rendered, leaving lots of garbage on the screen, and with 4 MB all textures go bananas. Smoke in Carmageddon is not bilinearly filtered and the car shadow flickers, shadows in Expendable are drawn on top, and the status bar in Formula 1 is broken. Space dust and engine trails in Homeworld are opaque and single-colored. Reflection mapping in Monster Truck Racing 2 breaks car texturing. The same thing happens in Ultimate Race Pro, along with a very serious bug that draws most polygons in red. Battlezone and the Interstate 76 demo reject the card outright. Compatibility with newer games should be minimal; NHL '99 and Need for Speed 3 cannot be rendered properly. It may also be the reason why there is no support for OpenGL games. On top of that, the Laguna3D 2 MB froze the test rig a few times, and only the 4 MB card can safely complete the Falcon 4.0 test.
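For readers wondering why one missing blend mode hurts so much, here is a minimal sketch of what multiplicative blending does to a lightmapped surface. It uses the common "source times factor plus destination times factor" blending model with colors as 0..1 floats. The function name, variable names and color values are illustrative only and not taken from any driver or game.

```python
def blend(src, dst, src_factor, dst_factor):
    """result = src * src_factor + dst * dst_factor, per channel, clamped to 1.0."""
    return tuple(
        min(1.0, s * sf + d * df)
        for s, d, sf, df in zip(src, dst, src_factor, dst_factor)
    )


ZERO = (0.0, 0.0, 0.0)
ONE = (1.0, 1.0, 1.0)

wall = (0.8, 0.6, 0.4)       # base texture already in the framebuffer (dst)
lightmap = (0.5, 0.5, 0.5)   # second pass: half-bright lightmap texel (src)

# Multiplicative blending: the source is scaled by the destination color.
modulated = blend(lightmap, wall, src_factor=wall, dst_factor=ZERO)

# Additive blending, a mode that cannot darken anything.
added = blend(lightmap, wall, src_factor=ONE, dst_factor=ONE)

print(modulated)  # wall darkened to half brightness
print(added)      # wall washed out instead
```

In this model a lightmap pass needs the multiplicative mode to darken the wall; additive blending can only brighten it. That would be consistent with the missing world lighting and textures described above, though the exact failure inside the driver is not known.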

For more screenshots see the Laguna3D gallery.

Jet speed?

I chose ATI's Rage IIc for comparison. It was in a similar market segment and suffered from its own blending and perspective correction problems. If Laguna3D could draw a full-featured 3D pixel in a single cycle, as Cirrus claimed, this should not be a contest, but as you can see that rarely happens. Even if the execution units are designed to process multiple operations in a single pass, their inputs cannot be fed fast enough.

In average framerate the 5464-based PCI card is the loser, delivering 81% of the speed of the Laguna3D AGP 4 MB. In between those two, Cirrus makes a point for AGP: the CL-GD5465 with only two megabytes of local memory performs just like the better-equipped Rage IIc. Minimal framerates are weaker; Laguna3D AGP needs four megabytes to barely edge out the Rage IIc. The other two trail it by some 10%.

So much for cards running some 20% below the planned maximum clock. The performance impact of frequency is quite linear. Here are the results for a Laguna3D driving its memory at the top speed of 600 MHz:

Compared with the fast variant of the first Permedia, this Laguna pulls clearly ahead: 15% on average, and a whopping one third better in minimal framerates.

Cirrus abandons PC graphics

There was little excitement around Laguna upon release and it remained one of the lesser-known cards of its time. Performance, feature set, drivers, image quality: in all aspects I consider it average at best. The first 5464 chip obviously did not arrive in the expected shape, so the AGP version was also a bug-fixing effort. Taking price into account, it performs quite well in old games even with a small amount of local memory. After testing the last card I would say Cirrus did a fine job on the 5465; its performance is respectable in this price range. But something extra was missing for it to become a gamer's choice. There is a suspicious lack of reviews, as if Cirrus themselves had stopped believing in their graphics products. Laguna3D turned into a problem child, sold mostly at discount prices.

And Cirrus Logic as a whole was bleeding. During the latter half of the 1990s poor investments, slow product development and overcapacity in the fabrication ventures wreaked havoc on the company. 1998 was a year of further workforce cuts and the divestment of graphics along with modems and advanced systems products. In 1999 Hackworth stepped down and Cirrus went through continuous changes. The fabrication partnerships with both IBM and Lucent were ended. Hackworth stood behind his fab investments, because at its peak Cirrus had to redesign its chips multiple times for several manufacturers to meet customer demand. So opinions differ on whether the company was mismanaged or a victim of its own success. In any case, the focus then moved to storage, audio chips and data acquisition technology for communication and industrial applications. The new millennium brought growing demand for consumer electronics based on digital audio and video technologies, which was a good wind for Cirrus and carries the company to this day.