Vintage 3D

2024

December

Finally time to expand the 2nd generation coverage: the Rage 128 VR review is up. I know this was a slow year, but becoming a father raised the difficulty somewhat.

June

Have you heard the good news? The Alliance AT3D is not such a hopelessly incompatible 3d accelerator after all. After a complete retest, the new findings were added to the original review.

February

A new review is up. Maybe the youngest card I will ever do. Read about the PowerVR Neon 250, fresh from the second half of 1999.

2023

After a calm time with the G200 came a much more difficult review. This S3 card was more complicated than any Virge: the Savage3D review is up.

April

After the last fresh adventure I decided to enter safe waters and review the well-known G200 by Matrox. Then I got so bored I decided to shut this whole thing down. Just kidding, it is only 1st April: the G200 review is up.

2022

I wanted to finish the year with something special, unique. Truly new information, like in the good old times. Have you ever seen a review of the SiS 300? Me neither. Let's fill another gap.

October

ATi Rage XL review is up.

August

The first step into the next generation is ... a review of an integrated graphics core. Since the i740 was such a capable card, and realizing the impossibility of testing a discrete i752, I gave the integrated core a go. With the help of a Graphics Performance Accelerator in the AGP slot, so there is that. A small step forward, but a needed one. The IGP was not extensively covered back in its day (not to mention the cancelled card) and I don't like such gaps.

March

The card I bought is the Neon 250. After a bit of software struggle I got her up and running. Not having the Neon was my mental excuse for not going into the next generation. Slowly I am preparing. Of the roughly 70 tests I try on the 1st gen, around 40 can be applied to the next gen as well. Not all will work out in the end, but that is plenty of comparison points to have.

2021

December

You may notice small updates here and there. The main thing to say is that I am spending (your) money. I ordered and eagerly await a newly made Neon 250. Not having that little bugger was my main excuse against investigating that whole generation. All the donations so far covered but a small fraction of the cost, but thanks to all the donors! All the best in the new year.

May

There is now a page for unrealized or unfinished gaming 3d endeavors of various companies from the 90's.

March

Galleries using Flash were replaced. People using old browsers can still access the Flash galleries through the old links.

2020

December

Finally forced myself to crunch the numbers for the Permedia NT 8 MB. Always one of the hardest cards to benchmark, it takes a while to arrive at any well-founded conclusion.

November

A mistake occurred. I don't know what happened back then, but I wrote in the ATI Rage Pro review that the Rage XL made no 3d improvements. Regarding 3d architecture these chips (XL, Mobility P and probably XC) are almost the old Rage Pro. However, going through the cards now I see that transparent textures are finally bilinearly filtered. Coupled with higher frequencies, this makes the Rage XL distinctly better than the Rage Pro. Should I ever venture into 1999 territory, you can expect a full benchmark of such cards as well.

April

After lengthy consideration I decided to include the PC-FXGA in the database. It is probably the only board targeting mainly developers rather than gamers, but in all other respects it fits in. Well, one can see how its "game" library is also questionable, but I can be a bit lenient when it comes to 1995 chips. Oh, the hardware niches we no longer have today.

March

Recently I acquired another Laguna3D AGP card. Fortunately, not only to put her on a shelf. After a few tests it became apparent that this one is faster than any previous one, actually running its RDRAM at 600 MHz. That is the frequency Cirrus stated for the very first Laguna3D, which makes my PCI card look suspiciously underclocked. Whether it is an unlucky coincidence or a hint at a bigger story behind the troubled Laguna3D remains to be seen. In any case, the review is expanded with a full benchmark of the new card!

Plus I am trying these fancy new web graphs. Sorry, retro browsers. How do they look?

2019

February

Pixel pipelines are back. In the database, you might notice a return of sorts to this once most basic parameter. I reconsidered the development of rasterizers and decided to return to that good old definition: how many pixels they output per clock. Values should now be recalculated for all chips featuring unified shaders.

2018

August

Thanks to Vogons member Hard1k I got my hands on my first Mpact! card with video output.

That finally means a proper Vintage3D review of its 3d acceleration.

March

My database has reached 600 SKUs. Now I will dive into the Permedia NT.

January

I would like to share what has been keeping me busy lately: finishing my very own video game. Everyone with an Android phone can give it a try: visit Mighty Square on Google Play. It is but a small distraction that may hopefully entertain a few people. I will be thankful for any feedback. You can still help me make it better.

2017

August

Another super sampled anti-aliasing update, this time about the Intel i740 chip.

I haven't seen this one covered before.

April

The Riva 128 was the only chip of its time supporting super sampled anti-aliasing. A small segment on that anti-aliasing was added to her review.

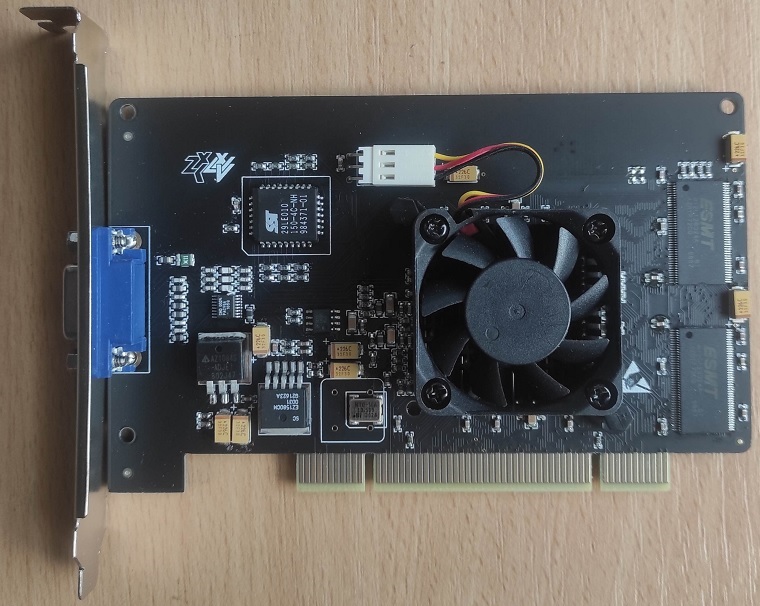

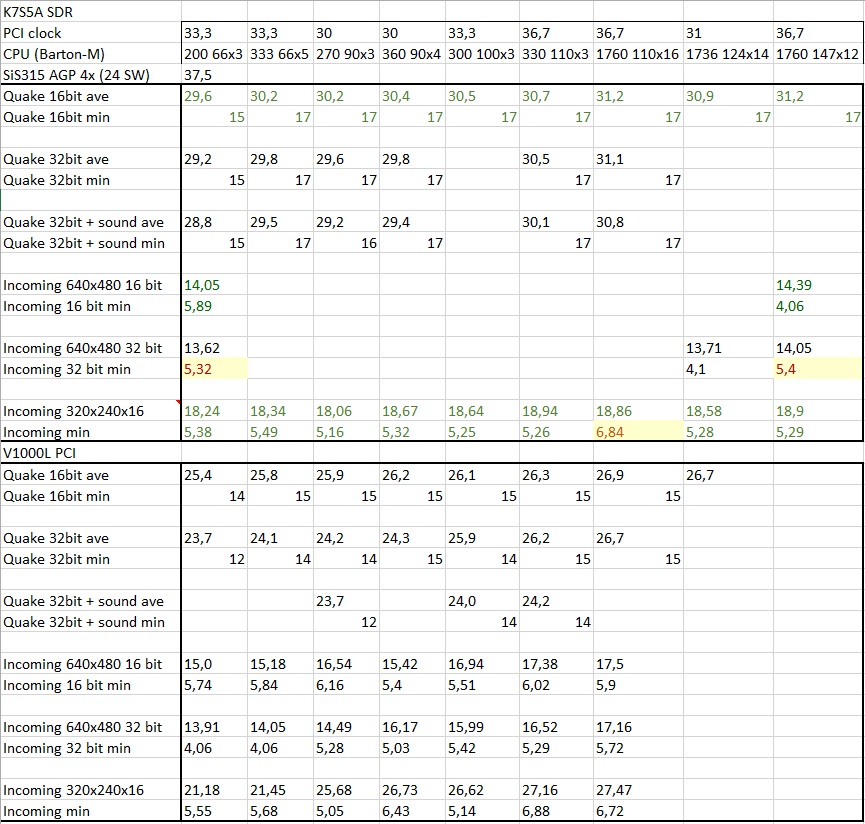

October

Tried more Trident and Vérité 2 drivers; some values were added or corrected. And then my motherboard died after all the abuse. A sign to end this? But I had just acquired my first Permedia, the IBM version. So for this test (perhaps the last one) a K7S5A fine-tuned for similar performance was used. Thanks to that, the performance difference between the IBM and the Texas Instruments versions can be quantified, assuming my cards are good representatives and my methods adequate. Despite all efforts, some strange inconsistencies remain. But there are only a few empty fields left...

July

I played a bit with the Super Socket 7 platform, looking into things like the memory bandwidth dependence of the Intel 740 AGP. I tried to find out more about Laguna3D, tried every driver I could find to get more out of the cards, and broke into one of my spare cards to measure the die size. I think I have finally at least tightened the range of possible clocks and clarified the distinctions between the 5464 and 5465 in the review. After some time pondering the (lack of) meaning of "pixel pipeline" today, I decided to change the pipeline count for unified shader architectures. The new number is a guesstimate of how many pixels the shader arrays can work on at once, and it leads to realistic pixel:texel ratios. It is a wild move, but the old count has no meaning for current architectures. Other candidates, like the number of rasterized pixels, would most of the time not say anything specific about a SKU either.

April

The review of the Starfighter PCI is up. Long story short, local texturing does not always have to be faster.

March

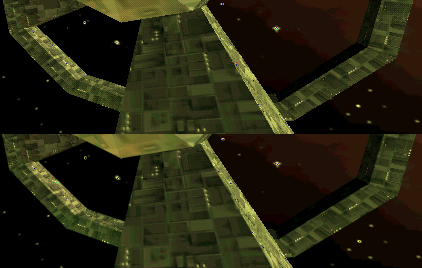

This month I investigated the CPU and PCI bandwidth dependence of the PCX1. A K7S5A with a mobile Athlon and SDR memory set to slow timings was used. It is a system too modern for the PCX1, but with downclocking it seemed reasonable. As you can see, the PCI bandwidth was hardly a factor there. Maybe with a platform from the proper time it would look different.

A 1024x768 JPEG Tomb Raider screenshot from Simon was also added to the PCX2 review, because even with his guidance I could not get screenshots to work.

Also, there is yet another new column in the database, called alternative clock. It will hold the clock of an alternative domain (mostly shader), or the boost or base clock, depending on the SKU. I am going to review one more card in April, time to slay another false legend :)

February

More updates in the database; most of the triangle setup rates should be correct now. Because I don't like empty cells, the geometry processing column will now also contain geometry shader engine versions and unit counts. They started to appear almost exactly with the transition to unified shaders, so hopefully it won't cause confusion. After spending some more time with 3D Rage II cards, I am convinced they need three clocks to map one texel. The first 3D Rage needs even more. I also fixed my blunder with the Midas 3: the card has only one megabyte of memory for textures and parameters. My bad.
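To put the three-clocks-per-texel estimate in perspective, here is a minimal Python sketch of the peak texel rate it would imply. The 60 MHz core clock is only a placeholder picked for illustration, not a measured value for any particular card, and the function name is made up for this example.

```python
def peak_texel_rate(core_clock_mhz: float, clocks_per_texel: float) -> float:
    """Theoretical peak texel rate in Mtexels/s for a single texture unit."""
    if core_clock_mhz <= 0 or clocks_per_texel <= 0:
        raise ValueError("clock and clocks per texel must be positive")
    return core_clock_mhz / clocks_per_texel


# Hypothetical clock, NOT a measured value for any specific board.
ASSUMED_CLOCK_MHZ = 60.0

one_clock = peak_texel_rate(ASSUMED_CLOCK_MHZ, 1)    # ideal one texel per clock
three_clock = peak_texel_rate(ASSUMED_CLOCK_MHZ, 3)  # three clocks per texel

print(f"1 clock per texel:  {one_clock:.1f} Mtexels/s")
print(f"3 clocks per texel: {three_clock:.1f} Mtexels/s")
```

As with the database values, this is a theoretical peak only; real games will land below it.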

January

Nothing retro this month, I was working on my database. Recently triangle setup rates became a bit interesting. I used to keep them in one column together with the vertex transformation rates used for vertex shader era cards. Once unified shaders came, the numbers exploded and I stopped filling them in. And now we finally have chips scaling triangle rates above one per clock (not necessarily rasterization). I wanted to put this in, so the values are finally divided into separate columns. As usual, the values are theoretical peaks. Where there are more options (like when some GeForce SKU has a variable number of GPCs), the best case is used. For cards without a setup engine, the maximum triangle throughput will be used in that column. I certainly made mistakes when refilling the columns from top to bottom, but they will get fixed later.

The purpose of this website is to remember and find new information about the first generation of gaming 3D cards. What is first gen? Well, there are many definitions. A common one would probably define three early generations of 3d gaming chips: first geometry accelerators like the Millennium or Imagine 128 II, then the first texture mappers like Gaming Glint or Virge, and finally "mature" architectures like Verite and Voodoo. I want to cover this whole range, from the very first accelerators (if possible) to anything released before Voodoo2. From there on, 3d chipsets were getting more and more comprehensive reviews and are therefore well known.

I want to show barely tested chips in an extensive collection of real games. The benchmark suite currently consists of around 40 games from 1996 to 1999 and 2-3 artificial benchmarks. Reviews are not done from a user perspective. I am trying to examine and compare performance, which means no proprietary APIs. Cards are tested with the latest or best available drivers, in a system saturating the video accelerators with power unreachable at that time. In the future I might build a low end rig to examine performance in a budget PC.

The main purpose is to learn about chips which were not reviewed extensively in the ways we are used to now. In fact, information about the gaming performance of most first generation cards is remembered almost only through word of mouth. I am not a professional hardware reviewer, nor a graphics technology expert, but I will try to do my best to reveal the real capabilities of vintage 3D cards.

My benchmarking practices are definitely not the best. I don't repeat tests unless the results seem off. I simply don't have enough time. Also, most of the old boards lack an option to disable vsync, even after trying various tweakers. Because of this I run all the tests with vsync, unless the application itself can disable it. This wouldn't be much of a problem with a high speed CRT, but I have only LCDs now. Therefore 75 Hz is used. The results still have value, since vsync is almost always on by default and most users don't change video settings. The performance corresponds to the user experience; however, speed differences between cards can be skewed.
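A rough illustration of how vsync can skew differences between cards, as a minimal Python sketch. It assumes a simplified model that is not taken from my measurements: strict double buffering (no triple buffering) and a constant render time per frame, so every frame occupies a whole number of 75 Hz refresh intervals. The function name and the sample frame rates are made up for the example.

```python
import math


def vsynced_fps(raw_fps: float, refresh_hz: float = 75.0) -> float:
    """Frame rate under vsync, assuming double buffering and constant frame time."""
    if raw_fps <= 0 or refresh_hz <= 0:
        raise ValueError("frame rate and refresh rate must be positive")
    # A frame that misses a refresh has to wait for the next one,
    # so it takes a whole number of refresh intervals.
    intervals = math.ceil(refresh_hz / raw_fps)
    return refresh_hz / intervals


# Hypothetical unsynced frame rates of imaginary cards.
for raw in (90.0, 74.0, 40.0, 38.0, 20.0):
    print(f"{raw:5.1f} fps without vsync -> {vsynced_fps(raw):5.2f} fps with vsync")
```

In this model a card capable of 74 fps and one capable of 38 fps both report 37.5 fps, while anything above 75 fps is capped at 75. Real benchmark averages mix frames of different lengths, so the steps are softer in practice, but the direction of the distortion is the same.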